Ambient Voice Technologies (AVTs)

Summary

The rapid adoption of AI scribes, in the form of Ambient Voice Technologies (AVTs) and Large Language Models (LLMs) for synthesising and summarising clinical notes, presents both an opportunity and a risk for GP trainees, who will be our future GP workforce: the opportunity to reduce administrative burnout, and the risk of eroding clinical “muscle memory.”

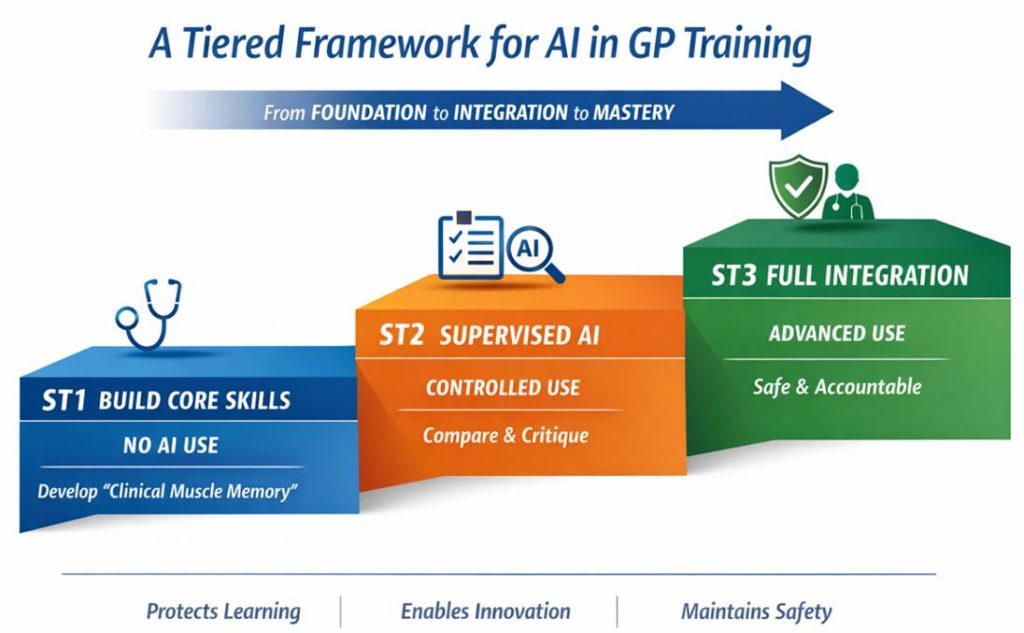

This paper proposes a tiered roadmap to ensure GP trainees develop core consultation and reasoning skills before augmenting them with AI.

Objective

To establish a tiered competency model for the use of LLMs and AVT scribes.

Educational Rationale

The primary risk of early-stage AI adoption in GP training is “Cognitive Offloading.”

If a trainee uses an AI scribe before they have mastered history-taking, clinical synthesis and summarisation, they fail to build the foundational levels of Bloom’s Taxonomy.

By aligning AI access with the trainee’s progression through the taxonomy, we ensure that technology augments rather than replaces clinical intelligence.

Bloom’s Taxonomy

This is a hierarchical framework used by educators to classify learning objectives into levels of complexity and specificity.

Developed in 1956 (and revised in 2001), it rests on the idea that you cannot effectively perform higher-level cognitive tasks (like designing an AI workflow) until you have mastered the foundational ones (like understanding the clinical guidelines).

In the context of GP training, Bloom’s Taxonomy explains why an ST1 shouldn’t jump straight into AI scribing: doing so would leave their expertise fragile.

Here is an example hierarchy:

| Level | Action | Application to GP Training |
| --- | --- | --- |
| 1. Remember | Recall facts and basic concepts | Memorising the red flags for a presentation such as headache |
| 2. Understand | Explain ideas or concepts | Explaining why a certain medication is contraindicated |
| 3. Apply | Use information in new situations | Conducting a consultation and writing a manual note |
| 4. Analyse | Draw connections among ideas | Comparing an AI-generated note against a manual one to find errors |
| 5. Evaluate | Justify a stand or decision | Critiquing the safety of an AI tool’s summary of a complex patient |
| 6. Create | Produce new or original work | Developing new AI protocols for a PCN or Practice |

The ST-Phased Roadmap for AVT use within live clinical consultations

| Training Year | Taxonomy Level | AI Access Status | Primary Objective |
| --- | --- | --- | --- |
| ST1 | Remember & Understand | Prohibited | Build “Clinical Muscle Memory” and manual note-taking skills |
| ST2 | Apply & Analyse | Controlled | Develop “Human-in-the-Loop” scepticism and prompt engineering |
| ST3 | Evaluate & Create | Integrated | Master efficiency and ethical oversight for post-CCT life |
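For practices or training programmes that want to encode the roadmap in software (for example, to switch scribe access on or off per trainee), the table could be expressed as a simple lookup. This is a minimal sketch only: the names `Phase`, `ROADMAP` and `may_use_scribe_live` are hypothetical, and reading “Controlled” as “permitted only under supervision” is an assumption drawn from the ST2 policy in this paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    """One row of the ST-phased roadmap table."""
    taxonomy_levels: tuple
    ai_access: str  # "Prohibited", "Controlled" or "Integrated"
    objective: str


# Values copied from the roadmap table; the structure itself is illustrative.
ROADMAP = {
    "ST1": Phase(("Remember", "Understand"), "Prohibited",
                 "Build clinical muscle memory and manual note-taking skills"),
    "ST2": Phase(("Apply", "Analyse"), "Controlled",
                 "Develop human-in-the-loop scepticism and prompt engineering"),
    "ST3": Phase(("Evaluate", "Create"), "Integrated",
                 "Master efficiency and ethical oversight for post-CCT life"),
}


def may_use_scribe_live(training_year: str, supervised: bool) -> bool:
    """Return True if the roadmap permits scribe use in this consultation.

    Assumption: "Controlled" access means use is allowed only when the
    clinical trainer is supervising.
    """
    access = ROADMAP[training_year].ai_access
    if access == "Prohibited":
        return False
    if access == "Controlled":
        return supervised
    return True  # "Integrated"
```

A competence-based variant could key the lookup on demonstrated capability rather than training year without changing the rest of the sketch.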

Phase 1: ST1 – The “Analogue” Foundation

Policy

No use of AI scribes or LLMs for live clinical documentation. The focus is on developing the trainee’s “internal scribe”.

Rationale

To ensure the trainee can independently identify red flags and confidently complete a concise clinical entry in the electronic medical record. This requires the ability to listen, summarise and record a patient history whilst maintaining rapport.

Key Activity

Mandatory education modules on AI literacy (e.g. how LLMs work). Trainees learn the mechanics of “hallucinations” and the governance of data privacy (GDPR/DPIA/DCB) without using AI tools with live patients.

Phase 2: ST2 – Analytical Integration

Policy

Use supervised by the clinical trainer during tutorials and Consultation Observation Tool (COT) assessments.

Rationale

Once their clinical reasoning is established and confirmed by the supervisor, trainees begin to distinguish between their own clinical findings and AI-generated summaries.

Key Activity

The “Parallel Note Audit.” Trainees write a note manually, then generate one via AI, and critique the differences. This builds the ability to spot omissions in automated text.

“Prompt Engineering.” Learning to use scribes for onward tasks (e.g. letter summarising).

“Informing patients.” Developing proficiency in informing patients that a scribe is being used within the consultation.
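As an aid to the “Parallel Note Audit” described above, a line-by-line comparison can give the trainee and trainer a starting point for discussion. The sketch below is illustrative only: the function name `audit_notes` is hypothetical, the example notes are invented rather than real patient data, and a textual diff of this kind only flags wording differences. It does not judge clinical significance, which remains the trainee’s task.

```python
import difflib


def audit_notes(manual_note: str, ai_note: str) -> dict:
    """List lines present in only one of the two notes.

    Lines in the manual note but not the AI note are candidate omissions;
    lines in the AI note but not the manual note are candidate additions
    that the trainee should verify against the consultation.
    """
    manual_lines = [ln.strip() for ln in manual_note.splitlines() if ln.strip()]
    ai_lines = [ln.strip() for ln in ai_note.splitlines() if ln.strip()]

    omitted, added = [], []
    for entry in difflib.ndiff(manual_lines, ai_lines):
        if entry.startswith("- "):
            omitted.append(entry[2:])
        elif entry.startswith("+ "):
            added.append(entry[2:])
    return {"omitted_by_ai": omitted, "added_by_ai": added}


# Invented example notes, for illustration only.
manual = """Headache for 3 days
No visual disturbance
No neck stiffness
Plan: safety-net advice"""

ai = """Headache for 3 days
No neck stiffness
Plan: safety-net advice
Prescribed sumatriptan"""

result = audit_notes(manual, ai)
print("Omitted by AI:", result["omitted_by_ai"])
print("Added by AI:", result["added_by_ai"])
```

Exact line matching is deliberately strict: a paraphrased line shows up as both an omission and an addition, which prompts the trainee to read both versions rather than trust the tool.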

Phase 3: ST3 – Evaluative Mastery

Policy

Full use of practice-approved, NHS-accredited AI scribes for routine clinics, in preparation for post-CCT practice.

Rationale

At this stage, the trainee has the mastery to appraise the safety of AI-generated plans and design efficient workflows for high-volume General Practice.

Key Activity

Mastery of informing patients. Learning how to explain the “Ambient Listener” to patients while maintaining the confidence of the doctor-patient relationship.

Demonstrating the ability to check scribe output comprehensively.

Understanding the liability attached to generating notes and entering them into the electronic patient record.

Conclusion

The integration of AI into GP training must be deliberately informed, staged, and grounded in educational theory to avoid undermining the development of core clinical competencies.

By aligning access to AI tools such as AVTs and LLMs with progression through Bloom’s Taxonomy, this framework ensures that trainees first build essential skills in history-taking, clinical reasoning, and documentation before augmenting their practice with technology.

The proposed ST-phased roadmap balances the benefits of AI (efficiency, reduced administrative burden, and scalability) with the need to preserve clinical “muscle memory” and professional judgement.

Ultimately, this approach positions future GPs not as passive users of AI, but as critical, informed practitioners capable of safely integrating these tools into high-quality patient care.

Dr Osman Bhatti MBBS FRCGP

GP Trainer & Programme Director

Digital Health Lead

Clinical Consultant – Accurx

One response

This is a thoughtful and much-needed contribution — particularly the framing around cognitive offloading and the use of Bloom’s taxonomy, which is exactly the right educational lens for this problem.

The “parallel note audit” in ST2 is especially strong. That is where real clinical judgement is forged — not by avoiding AI, but by learning how and when to challenge it.

Where I think this becomes even more interesting is when we zoom out from training into the system we are actually preparing trainees for.

We are no longer training doctors for a purely human documentation environment. We are training them for an AI-augmented system where:

- documentation is medico-legal, not just cognitive
- time pressure is extreme
- and administrative burden is already distorting clinical work

So there are really two competing risks here:

1. Cognitive fragility from premature AI reliance
2. System inefficiency and burnout from not adopting AI fast enough

The NHS is already feeling the second quite acutely.

A couple of areas that might strengthen this further if it were to inform national guidance:

1. Moving from stage-by-year → stage-by-competence. Not all ST1s are equal, and progression based on demonstrated capability (note quality, clinical reasoning, ability to detect AI error) may be more robust than training year alone.
2. Bringing governance earlier into the model. Patient consent, auditability, and error detection probably need to start at first exposure, not just at ST3.
3. Adding objective metrics. If this is to scale, we’ll need to show:
   - does AI improve note quality?
   - does it reduce error or introduce new ones?
   - what happens to consultation quality and time?

The core principle you’ve outlined — competence before convenience, augmentation not replacement — feels exactly right.

The next step, I suspect, is translating this from an educational framework into an operational one that works at NHS scale.

Curious to see how this evolves — it’s a space that’s moving faster than most training structures are designed to handle.